Why AI Isn’t Disrupting the Design Process in AEC (Yet)

If you’ve been on LinkedIn any time in the last two years, you’ve seen the headlines: “AI will replace architects.” “Generative AI is transforming construction.” “The end of CAD as we know it.”

Meanwhile, if you actually walk into an architecture studio or an engineering firm today, you’ll find the same Revit models, the same Rhino files, the same Grasshopper definitions, and the same late nights coordinating clashes before a deadline.

The drawings still get stamped by humans. The details still get drawn by humans. The models still get built by humans.

So what’s going on? Why does an industry that accounts for roughly 13% of global GDP feel almost untouched by the AI revolution that has upended software engineering, marketing, and customer support in the span of 24 months?

At e-verse, we build software for the AEC industry, and we spend our days at the exact intersection where large language models meet building design.

This gives us a fairly unromantic view of what AI can and cannot do in our field. Here’s the honest picture.

AI in AEC: The short version

AI has not disrupted the AEC design process because the current dominant form of AI — large language models — was built to predict the next plausible word in a sequence.

Buildings are not sequences of plausible words. They are precise, regulated, physically buildable, three-dimensional, parametric, coordinated systems where a 2 cm mistake can mean a failed inspection, a lawsuit, or a collapsed slab.

The gap between “plausible” and “correct” is where our entire industry lives.

And that gap is exactly where today’s AI is weakest. It also explains why some parts of the industry, like rendering, are being completely disrupted while others seem to be untouched.

Reason 1: LLMs are not precise, and AEC is a precision industry

Let’s start with the most fundamental problem. Large language models are probabilistic. They generate outputs that are statistically likely given the input, not outputs that are verifiably true.

This is not a bug that will be patched in the next release. A growing body of research argues that hallucinations stem from the fundamental mathematical and logical structure of LLMs, drawing on computational theory and Gödel’s First Incompleteness Theorem to show that every stage of the process — from training to retrieval to generation — has a non-zero probability of producing a hallucination.

In plain terms: the technology will always be capable of confidently making things up.

In a chat interface, that means the occasional wrong date or a fabricated citation.

In architecture, it means a stair with 17 risers where the code requires 16, a fire-rated wall that doesn’t actually reach the slab above, a beam sized for the wrong load case, or a parking stall 10 cm narrower than the local minimum.

These are not charming quirks. These are the kinds of errors that fail code review, blow schedules, and end careers.

There’s a second precision problem that gets less attention: LLMs are bad at numerical reasoning in general.

They don’t actually “calculate”; they generate text that looks like calculation. If you ask an LLM to verify that a 12-meter simply supported steel beam can carry a 25 kN/m distributed load, you’ll get a fluent, confident answer that may or may not be structurally correct.

For anything life-safety related — which is essentially all of structural and a great deal of MEP — this is not acceptable as a primary design tool. At best, it’s a junior assistant whose homework you always have to check.
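For contrast, the beam check mentioned above is ordinary deterministic arithmetic once it is handed to code instead of a language model. The sketch below uses the textbook formulas for a simply supported beam under a uniform load (V = wL/2 at the supports, M = wL²/8 at midspan). The function names and the 355 MPa yield stress are our assumptions for illustration, and the check ignores load factors, deflection, and buckling, so treat it as a sanity check rather than a design.

```python
def simply_supported_udl(span_m: float, load_kn_per_m: float) -> dict:
    """Peak shear and bending moment for a simply supported beam under a uniform load."""
    max_shear_kn = load_kn_per_m * span_m / 2           # V = wL/2, at the supports
    max_moment_knm = load_kn_per_m * span_m ** 2 / 8    # M = wL^2/8, at midspan
    return {"max_shear_kn": max_shear_kn, "max_moment_knm": max_moment_knm}


def required_section_modulus_cm3(moment_knm: float, yield_stress_mpa: float) -> float:
    """Elastic section modulus needed to keep bending stress at or below yield.

    No safety or load factors are applied; this is an illustrative lower bound only.
    """
    moment_nmm = moment_knm * 1e6                       # kN*m -> N*mm
    return moment_nmm / yield_stress_mpa / 1e3          # mm^3 -> cm^3


demand = simply_supported_udl(span_m=12.0, load_kn_per_m=25.0)
# Assumed steel grade with a 355 MPa yield stress (not stated in the text above).
modulus = required_section_modulus_cm3(demand["max_moment_knm"], yield_stress_mpa=355.0)

print(demand)            # shear 150 kN, moment 450 kN*m
print(round(modulus))    # required elastic modulus in cm^3, before any factors
```

The point is not the numbers themselves but that the same inputs always give the same outputs, which is exactly what a text generator cannot promise.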

Reason 2: LLMs cannot generate a parametric 3D building

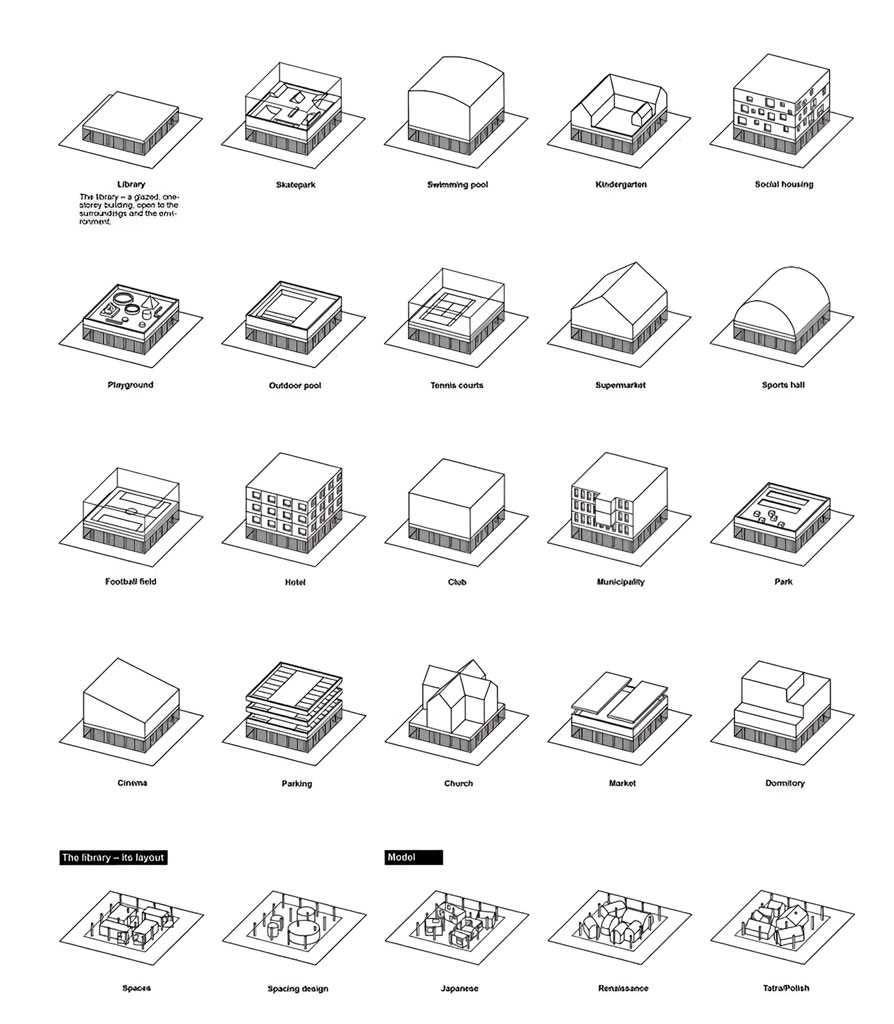

This is the one that most surprises people outside the industry. When someone sees Midjourney produce a stunning exterior render, it’s easy to assume we’re five minutes away from typing “four-story office building, Zaha Hadid style, 2,400 m²” and getting a Revit model back.

We aren’t close. Here’s why.

A building in the professional sense is not an image or a mesh. It’s a parametric model, a graph of relationships between walls, slabs, columns, openings, systems, and families, each carrying dimensions, materials, fire ratings, acoustic properties, structural properties, and manufacturer data.

When you move a wall in Revit, dozens of dependent elements re-coordinate themselves because they were never really “walls”; they were rule-bound objects in a database with a 3D representation attached.
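A toy version of that behaviour, written in plain Python rather than against the Revit API, looks something like the sketch below. The class names (`Wall`, `HostedDoor`, `Room`) are hypothetical and this is not how Revit is implemented internally; it only shows the idea that dependent elements recompute themselves when a host element changes.

```python
class Wall:
    """A wall reduced to one coordinate, plus the elements that depend on it."""

    def __init__(self, name: str, x: float):
        self.name = name
        self.x = x                   # position of the wall plane, in metres
        self._dependents = []        # elements that must update when the wall moves

    def attach(self, element) -> None:
        self._dependents.append(element)
        element.recompute()

    def move(self, dx: float) -> None:
        self.x += dx
        for element in self._dependents:
            element.recompute()      # propagate the change to everything hosted


class HostedDoor:
    """A door that lives in its host wall's plane, at a distance along the wall."""

    def __init__(self, host: Wall, along_wall_m: float):
        self.host = host
        self.along_wall_m = along_wall_m
        self.position = (0.0, 0.0)
        host.attach(self)

    def recompute(self) -> None:
        self.position = (self.host.x, self.along_wall_m)


class Room:
    """A room whose width is derived from the two walls that bound it."""

    def __init__(self, left: Wall, right: Wall):
        self.left, self.right = left, right
        self.width = 0.0
        left.attach(self)
        right.attach(self)

    def recompute(self) -> None:
        self.width = self.right.x - self.left.x


west = Wall("west", 0.0)
east = Wall("east", 4.0)
door = HostedDoor(east, along_wall_m=1.2)
room = Room(west, east)

east.move(0.5)                       # one edit, two dependent elements update
print(door.position, room.width)
```

A real model has thousands of these relationships, many of them circular or conditional, which is why the parametric engine, not the geometry, is the hard part.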

LLMs work with text tokens. They don’t have a native representation of 3D space, they don’t understand spatial relationships as geometry, and they don’t “see” a building model holistically.

Current language models don’t ingest a live Revit model’s 3D geometry; they rely on the data you provide (schedules, parameters, or snapshots).

The AI isn’t literally visualizing the building in 3D — it’s interpreting textual or 2D inputs about it, which means tasks like “find all areas of the model that are hard to access” or “generate an optimized structural grid layout” remain very challenging without explicit algorithms or additional tools.

Add to that the fact that BIM projects contain thousands of elements and parameters, far exceeding the context window of any current LLM.

You cannot simply paste a project into a chat. Even if you could, the model doesn’t know what a wall “means” in a structural sense versus an acoustic sense versus a code sense.
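A back-of-the-envelope estimate makes the scale problem concrete. Every number in the sketch below is an assumption we picked for illustration (element count, parameters per element, tokens per parameter, and the context window size), not a measurement of any particular project or model.

```python
def estimate_model_tokens(element_count: int, params_per_element: int, tokens_per_param: int) -> int:
    """Rough token count if every parameter of every element were serialized as text."""
    return element_count * params_per_element * tokens_per_param


def windows_needed(total_tokens: int, context_window_tokens: int) -> float:
    """How many context windows the serialized model would fill."""
    return total_tokens / context_window_tokens


# All four inputs are illustrative assumptions, not measured values.
total = estimate_model_tokens(element_count=20_000, params_per_element=30, tokens_per_param=8)
ratio = windows_needed(total, context_window_tokens=200_000)

print(f"{total:,} tokens, about {ratio:.0f}x the assumed window")
```

Change the assumptions and the ratio moves, but a parameters-only dump of a mid-size project is already in the millions of tokens before any geometry is included.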

The research community has tried to bridge this. Frameworks like Text2BIM orchestrate multiple LLM agents to convert natural language instructions into imperative code that calls BIM authoring APIs, generating editable models with internal layouts and external envelopes. These are genuinely clever systems — but the heavy lifting is done by the tool functions and APIs, not by the language model itself.

The LLM is a translator sitting on top of a traditional parametric engine. Without that engine, it produces nothing.
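The division of labour can be sketched in a few lines. This is not Text2BIM's code: the tool names (`create_wall`, `create_slab`), the JSON plan format, and the list standing in for a model are all hypothetical. In a real system the tool functions would call a BIM authoring API, and the plan would come from a language model instead of being hand-written.

```python
import json


# Hypothetical stand-ins for BIM authoring API calls. They hold all the
# geometric knowledge; the language model never touches geometry directly.
def create_wall(model: list, start, end, height_m: float) -> dict:
    wall = {"type": "wall", "start": tuple(start), "end": tuple(end), "height_m": height_m}
    model.append(wall)
    return wall


def create_slab(model: list, outline, thickness_m: float) -> dict:
    slab = {"type": "slab", "outline": [tuple(p) for p in outline], "thickness_m": thickness_m}
    model.append(slab)
    return slab


TOOLS = {"create_wall": create_wall, "create_slab": create_slab}


def run_plan(plan_json: str) -> list:
    """Execute a structured plan step by step, rejecting anything outside the tool set."""
    model = []
    for step in json.loads(plan_json):
        tool = TOOLS.get(step["tool"])
        if tool is None:
            raise ValueError(f"Unknown tool requested: {step['tool']}")
        tool(model, **step["args"])
    return model


# Hand-written example of what a model might emit for "a 6 m wall, 3 m high".
plan = '[{"tool": "create_wall", "args": {"start": [0, 0], "end": [6, 0], "height_m": 3.0}}]'
model = run_plan(plan)
print(model)
```

Remove the `TOOLS` dictionary and the plan is just text. Everything that makes the output a building element lives in the functions the plan calls.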

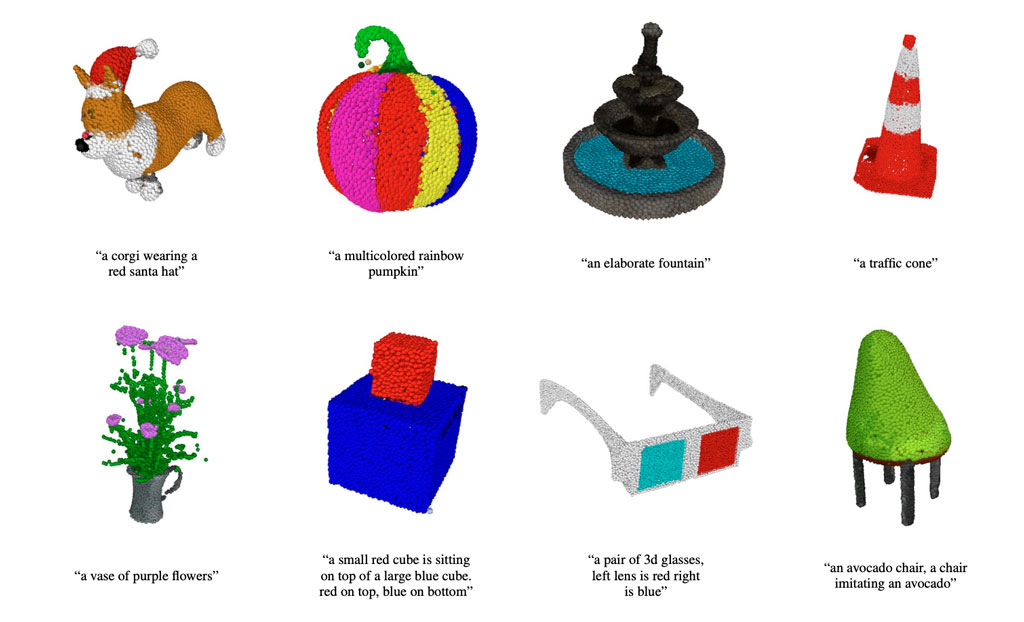

The closest AI currently gets to native 3D is models like Point-E by OpenAI, and those do not even produce complex 3D geometry. Parametric complexity would have to be layered on top of that, so we are still far from a usable building model.

Reason 3: LLMs cannot reliably generate 2D construction drawings either

You’d think 2D would be easier. It isn’t. Construction documents are not pictures of buildings — they are a legal, technical communication standard.

A section drawing contains a specific hierarchy of line weights, annotation families, reference bubbles, dimension strings, hatching conventions, and notes whose formatting is governed by company standards, national standards, and client requirements.

Every line is there because someone on site needs it to build something correctly.

Diffusion models like those behind Midjourney and DALL·E can produce images that look like architectural drawings.

They cannot produce drawings that coordinate with a model, scale correctly, reference the right details, or pass a QA/QC review.

They are, fundamentally, 2D image-generating tools with a limited understanding of architectural function and spatial organization — which is why they generate visually pleasing but spatially unrealistic images.

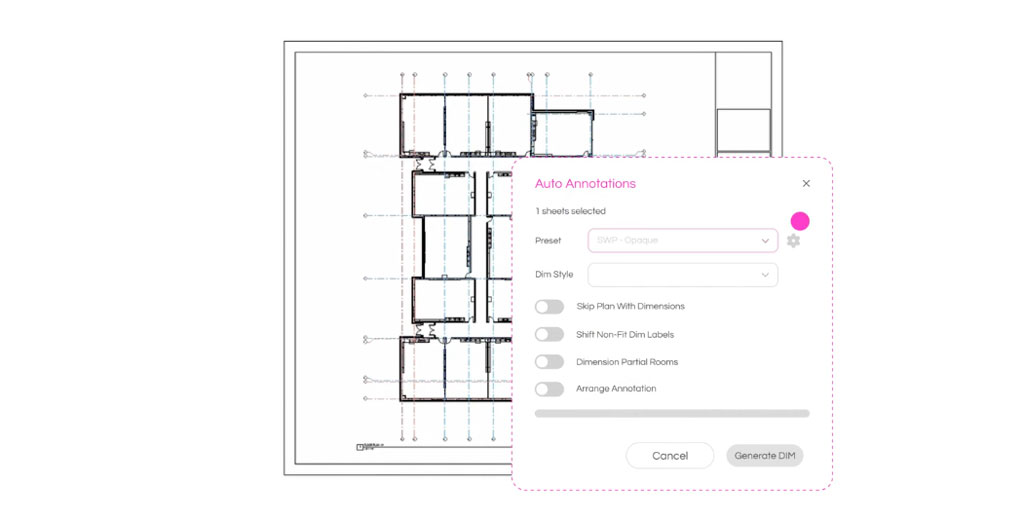

SWAPP, one of the more ambitious startups in this area, is specifically attacking construction document automation — feeding a 3D model and generating drawing sets, sections, elevations, door schedules, and finish schedules.

Even then, the intelligence comes from hard-coded drawing conventions and model analysis, not from a language model freely “drawing” the way a human does. And the outputs still require human review and correction before they’re issued.

One of SWAPP’s features helps you annotate drawings with AI. It is a great workflow, but still a long way from generating a complete, ready-to-stamp 2D drawing.

Reason 4: Buildings have to actually stand up, pass code, and get built

This is the deepest reason AI has not disrupted AEC, and it’s cultural as much as technical.

In most software industries, a bug is annoying. In AEC, a bug kills people.

The industry has spent a century building codes, permits, stamps, insurance, and liability structures specifically to ensure that the people signing off on a design are accountable humans with professional licenses.

No LLM is going to get a PE stamp any time soon. No municipality is going to accept “ChatGPT said so” as a compliance argument.

Generative AI in architecture must incorporate regulatory codes and topological constraints to ensure actual buildability — an area current research admits is not deeply addressed.

Even the most promising research on LLM-driven code compliance checking requires well-structured BIM models with precise metadata and still flags the need for manual correction of errors.
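The dependence on clean metadata is easy to see in even the simplest rule check. The sketch below tests one rule from earlier in this post (a fire-rated wall must reach the slab above) against a handful of wall records. The field names (`fire_rating_min`, `top_attached_to_slab`) and the sample data are hypothetical, and a real checker would read these values from the BIM model rather than from dictionaries.

```python
def check_fire_walls(walls: list) -> list:
    """Return (wall id, finding) pairs for walls that fail or cannot be checked."""
    findings = []
    for wall in walls:
        rating = wall.get("fire_rating_min")
        attached = wall.get("top_attached_to_slab")
        if rating is None or attached is None:
            # Without the metadata the rule cannot be evaluated at all.
            findings.append((wall["id"], "manual review: missing metadata"))
        elif rating > 0 and not attached:
            findings.append((wall["id"], "fail: rated wall stops short of slab"))
    return findings


# Hypothetical wall records for illustration.
walls = [
    {"id": "W-101", "fire_rating_min": 120, "top_attached_to_slab": True},
    {"id": "W-102", "fire_rating_min": 60, "top_attached_to_slab": False},
    {"id": "W-103", "fire_rating_min": 60},                      # parameter never filled in
    {"id": "W-104", "fire_rating_min": 0, "top_attached_to_slab": False},
]

for wall_id, finding in check_fire_walls(walls):
    print(wall_id, finding)
```

Notice that the missing parameter on W-103 does not produce a pass or a fail. It produces work for a human, which is the pattern the research keeps running into.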

Until AI can be held accountable for its outputs — technically, legally, and professionally — it will remain an assistant, not a replacement.

Reason 5: Every project is bespoke

Software engineering got disrupted fast because a huge fraction of code is repetitive: CRUD endpoints, React components, database migrations, test scaffolding.

LLMs were trained on enormous public code corpora and can produce passable versions of patterns they’ve seen a million times.

Architecture is the opposite. Every site is different. Every client is different. Every program, climate, zoning code, soil condition, and construction market is different.

Generative AI tools currently face real limitations in addressing complex, bespoke, or customized designs — exactly the kind of work that defines most architectural practice.

The training data simply doesn’t exist at the scale and quality needed. There is no GitHub for buildings. There is no Stack Overflow for structural calculations.

The IP is locked in proprietary firm libraries, and most of it was never digitized as structured data to begin with.

So where IS AI actually working in AEC?

Let’s be fair — it’s not that nothing is happening. It’s that what’s happening is narrower, more augmentative, and less disruptive than the hype suggests.

A 2024/2025 survey of 1,227 architecture professionals by Architizer and Chaos found that 46% already use AI tools in their projects, with another 24% planning to start soon.

The generative AI in architecture market was valued at $1.48 billion in 2025 and is projected to reach $5.85 billion by 2029, growing at a 41.1% CAGR, and venture capital investment in AEC-focused AI startups hit $4.2 billion in 2024, up from $1.8 billion in 2022.

That’s real money, and it’s going to real products. Here’s a non-exhaustive map of who’s doing what:

- Autodesk Forma (formerly Spacemaker) is the most mature early-stage site and massing platform. It runs environmental simulations — sun, wind, noise, embodied carbon — and now connects to Revit, Rhino, and third-party plugins like TestFit. It’s excellent for feasibility but outputs still require refinement in detailed design.

- TestFit is a rule-based real-time generator for site layouts, particularly multifamily, student housing, and parking-heavy developments. Despite often being called “AI,” it’s more accurately a very fast constraint solver — it can produce 3,000 valid site plans in under 10 seconds on a standard laptop. That’s the honest version of “AI feasibility.”

- Hypar, founded by ex-Revit lead Anthony Hauck and Dynamo creator Ian Keough, takes a code-first, cloud-native approach where users compose modular generative “functions” into building systems. It’s closer to programmable BIM than to ChatGPT-for-buildings.

- Finch 3D focuses on residential unit optimization inside a given envelope, with a Grasshopper integration that keeps facades responsive to unit mix changes.

- Maket.ai and Arkdesign.AI are going after the text-to-floorplan category at the affordable end of the market, mostly for residential early-concept work.

- ArchiStar is active in zoning analysis and feasibility.

- ArchiLabs is building a standalone, web-native, code-first parametric CAD platform with Python-first automation.

- SWAPP attacks construction document automation for large residential projects.

- Veras by Chaos and Archicad’s AI Visualizer (Stable Diffusion-based) handle the rendering-from-massing workflow that used to take days of post-production.

- Research projects like ETH Zurich’s work on LLM-based agents for conceptual form-finding, multi-story layout generation, and facade design, or academic frameworks like Text2BIM, are pushing the theoretical frontier.

Notice the pattern. None of these are “type a prompt, receive a building.”

They are all narrow, constrained, rule-bound tools that either operate in the earliest conceptual phases (where precision matters least) or solve a very specific downstream problem (parking layouts, unit optimization, schedule generation, rendering).

The closer you get to signed, stamped, code-compliant construction documents, the less AI is doing on its own.
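To make the “constraint solver, not AI” distinction concrete, here is a deliberately tiny sketch of rule-based layout generation for a rectangular parking lot. It is not how TestFit or any other product listed above works internally. The minimum dimensions, the candidate sizes, and the double-loaded module layout are all assumptions chosen for illustration.

```python
from itertools import product

MIN_STALL_WIDTH_M = 2.5    # hypothetical local minimum
MIN_AISLE_WIDTH_M = 6.0    # hypothetical local minimum


def enumerate_layouts(lot_width_m: float, lot_depth_m: float, stall_depth_m: float = 5.0) -> list:
    """Try every candidate stall/aisle size, keep the rule-compliant ones, rank by stall count."""
    layouts = []
    for stall_w, aisle_w in product((2.4, 2.5, 2.7), (5.5, 6.0, 6.5)):
        if stall_w < MIN_STALL_WIDTH_M or aisle_w < MIN_AISLE_WIDTH_M:
            continue                                     # hard rule violated, discard
        module_depth = 2 * stall_depth_m + aisle_w       # two stall rows sharing one aisle
        modules = int(lot_depth_m // module_depth)
        stalls_per_row = int(lot_width_m // stall_w)
        stalls = modules * 2 * stalls_per_row
        if stalls > 0:
            layouts.append({"stall_w": stall_w, "aisle_w": aisle_w, "stalls": stalls})
    return sorted(layouts, key=lambda layout: layout["stalls"], reverse=True)


layouts = enumerate_layouts(lot_width_m=50.0, lot_depth_m=32.0)
for layout in layouts:
    print(layout)
```

Every layout it returns is valid by construction, because invalid candidates never make it into the list. That guarantee is what a constraint-based tool offers and what a generative model, on its own, does not.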

Even the 2025 AECO Design Slam at Autodesk University, essentially a showcase of the best-case scenario, had teams stitching together Forma, ArchiStar, Finch, Midjourney, Veo 3, and Nano Banana to produce a concept for a 2050 Nashville district. The AI was everywhere, and the humans were still everywhere.

Dean Rodolphe el-Khoury of the University of Miami School of Architecture put it well: an AI tool can generate 100 images of a building after receiving the relevant parameters, but it cannot choose the best one.

That choice — shaped by cultural context, client relationships, site history, and professional judgment — remains a human skill.

When is this going to change?

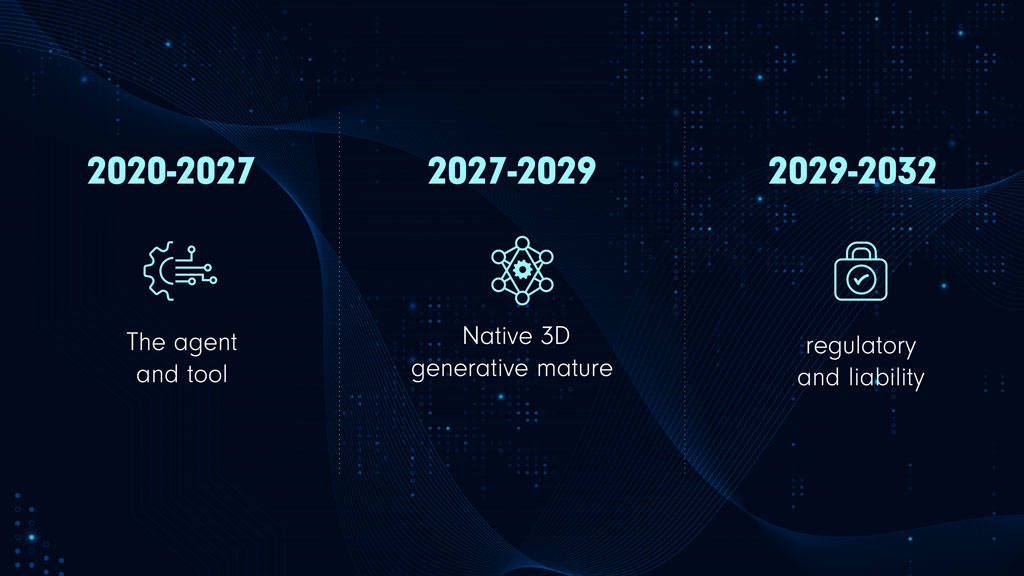

Here’s where we’ll risk a forecast. The disruption will come, but it won’t come from “a bigger LLM.” It will come from three trends converging, roughly on the following timeline.

2026–2027: The agent and tool-use layer matures.

LLMs are already being wrapped in agent frameworks with access to APIs, CAD tools, solvers, and retrieval-augmented generation grounded in real regulations.

Expect to see the first credible “copilots” inside Revit, Rhino, and ArchiCAD that can reliably automate well-defined, repetitive tasks — schedules, renaming, family swaps, basic clash resolution, code compliance pre-checks, and first-pass documentation.

This is where the productivity gains will show up first. Expect 20–40% time savings on documentation-heavy tasks, not “the AI designed the building.”

2027–2029: Native 3D generative models mature.

The real unlock isn’t bigger LLMs; it’s generative models that operate natively on 3D geometry and parametric graphs, the way diffusion models operate on images.

Research on 3D generative models and foundation models for architecture is progressing, and the gap between AI theory and real architectural application has already shrunk from a historical 62 years to about 2.5 years, according to a 2025 systematic review in Automation in Construction.

When a model can propose valid parametric massing options that are simultaneously code-aware, structurally reasonable, and editable in BIM, that will be the moment AEC actually shifts.

2029–2032: Regulatory and liability infrastructure catches up.

Even with the technology ready, nothing ships until the legal and professional frameworks catch up.

The EU’s AI Act already classifies building safety and energy compliance as “high-risk” applications, and California’s SB-1000 requires AI-assisted climate resilience reviews for public projects starting in 2026.

This kind of regulatory pressure is a double-edged sword — it forces adoption but also forces certification, audit trails, and accountability. The firms that win will be the ones whose AI outputs are reviewable, explainable, and integrable with stamped deliverables.

Our rough guess: by 2030, a mid-size architecture firm will do 50–70% of its early-stage massing, feasibility, and documentation work with heavy AI assistance. It will still employ architects, because the job will have shifted — less drafting, more curation, coordination, client-facing judgment, and high-level design direction.

The analogy isn’t “AI replaced architects.” It’s “CAD replaced drafting tables.” The profession didn’t disappear; the tools changed and the productive output per person went up.

The honest takeaway about AI in AEC

If you’re an architect, engineer, or developer reading this and wondering whether to panic: don’t.

The current wave of AI is a genuinely useful productivity tool that is worth learning, worth integrating, and worth budgeting for.

It is not a replacement for your expertise, your license, your judgment, or your relationships.

If you’re a founder or investor looking at AEC: the opportunity is enormous, but the winners will be the teams that deeply understand parametric modeling, BIM interoperability, building codes, and the legal realities of stamped documents.

A generic ChatGPT wrapper pointed at floor plans will not cut it. The companies that are actually getting traction — Forma, TestFit, Hypar, Finch, SWAPP — all have deep AEC DNA, not just AI DNA.

And if you’re just curious about where this is all going: AI will reshape AEC, but on AEC’s timeline, not Silicon Valley’s. Buildings are slow, heavy, regulated, and real. The software that runs them will be, too.

At e-verse, we build tools that sit exactly at this intersection of AEC and software. If the problems in this post sound like ones you’re wrestling with in your own practice, we’d love to talk.

Valentin Noves

I'm a versatile leader with broad exposure to projects and procedures and an in-depth understanding of technology services/product development. I have a tremendous passion for working in teams driven to provide remarkable software development services that disrupt the status quo. I am a creative problem solver who is equally comfortable rolling up my sleeves or leading teams with a make-it-happen attitude.