Forge Token Service Deployment with Serverless

Introduction

We have multiple projects that start from a Forge Viewer implementation and, of course, need a viewable token to access the models.

Every one of these projects needs a service that handles token creation, deployed with the client’s credentials and in different environments.

The simplest solution for us is a serverless function that handles the process and lets us scale and integrate with other products.

There are several ways to deploy a function to a cloud provider: uploading a zip file, copying the code into the provider’s console, or using Terraform or CloudFormation.

This month we tested the tools from Serverless Inc, a framework described as “[…] a command-line tool that uses easy and approachable YAML syntax to deploy both your code and cloud infrastructure needed to make tons of serverless application use-cases.” [1]

Sounds cool, no? Serverless lets you create Lambda functions in AWS (or their equivalents in Azure or Google Cloud) with minimal setup code.

You can run it on any machine that can run npm (or in Docker, if you want to do something fancy).

What is AWS CloudFormation?

AWS CloudFormation is an Amazon Web Services (AWS) service for managing infrastructure through code. Users define their cloud resources in templates, which CloudFormation then provisions and manages automatically. This Infrastructure as Code (IaC) approach replaces manual provisioning, which reduces errors and keeps deployments consistent. Templates can be versioned, so changes are easy to track, and the same approach works for anything from a simple web service to a complex, multi-tier architecture. Because every deployment comes from a template, security practices are applied consistently and costs are easier to manage.

What is Terraform?

Terraform, developed by HashiCorp, is an Infrastructure as Code (IaC) tool for managing cloud resources. Users declare the infrastructure they want in HashiCorp Configuration Language (HCL) or JSON, and Terraform handles provisioning and management across multiple cloud providers. It generates an execution plan, applies the changes, and maintains a state file that tracks the resources it manages. Multi-cloud support, declarative syntax, a rich ecosystem, and version-controlled configuration make it a common choice for automating infrastructure provisioning, network design, and application management. DevOps teams and organizations of all sizes use it to reduce manual tasks.

Why do we test it?

We like it because:

- It’s easy to use: install it, write one YAML file, run three commands, and that’s it.

- It supports Node.js and Python, among other languages.

- We can deploy big libraries without problems (using Docker).

- It handles all the roles and permissions for us.

- It allows integration tests.

Hands-on example

First, we need to install serverless globally to use it in all projects.

npm install -g serverless

Create a template (in this case a python3 project).

serverless create --template aws-python3 --name everse-serverless-test --path everse-serverless-test

Let’s enter the project folder, then create and activate a new virtual environment. We also need to install the serverless-python-requirements plugin to handle imported libraries.

cd everse-serverless-test/

npm init -y

npm install --save serverless-python-requirements

virtualenv venv --python=python3

.\venv\Scripts\activate.ps1

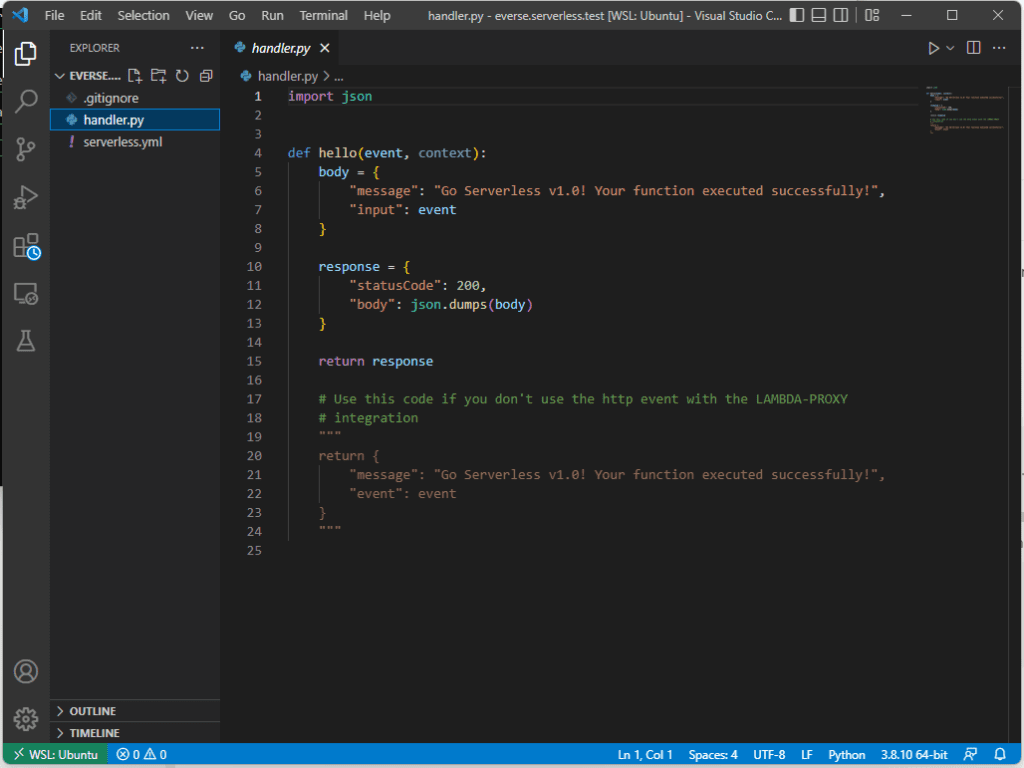

To check what we have created, open VS Code.

code .

You should see this!

Add the business logic to the function

Let’s modify the lambda_function.py file to return a valid token to access models on a Forge Viewer.

import json
import os

import requests

d_url = "https://developer.api.autodesk.com/"
client_id = os.environ["client_id"]
client_secret = os.environ["client_secret"]


def get_token(event, context):
    # Request a read-only viewables token from the Forge authentication endpoint.
    url = f"{d_url}authentication/v1/authenticate"
    payload = (
        f"grant_type=client_credentials&client_id={client_id}"
        f"&client_secret={client_secret}&scope=viewables:read"
    )
    headers = {"Content-Type": "application/x-www-form-urlencoded"}
    response = requests.request("POST", url, headers=headers, data=payload)
    return json.loads(response.text)


if __name__ == "__main__":  # To test the script locally
    print(get_token(None, None))
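The payload above is assembled by hand with an f-string, which works as long as the credentials contain no characters that need escaping. Below is a minimal sketch of an alternative, assuming you would rather let the standard library do the form encoding. `build_token_payload` is a hypothetical helper name, not part of the original sample, and the credentials are placeholders.

```python
from urllib.parse import parse_qs, urlencode


def build_token_payload(client_id: str, client_secret: str) -> str:
    """Return the form-encoded body for the client-credentials request."""
    fields = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": "viewables:read",
    }
    # urlencode percent-escapes characters such as '&' or '=' in the values.
    return urlencode(fields)


if __name__ == "__main__":
    body = build_token_payload("my-id", "s3cr&t=")  # placeholder credentials
    print(body)
    # Round-trip to confirm the secret survives encoding intact.
    print(parse_qs(body)["client_secret"][0])
```

The returned string can be passed as `data=` in the request exactly like the hand-built payload.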

⚠️ Note: Lambda functions can only receive and send JSON structures. Keep this in mind if you want to return blobs: you need to convert them to base64 and set the content type of the response accordingly.
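As a rough illustration of the note above, the sketch below returns binary content from a handler by base64-encoding it. It assumes the function sits behind an API Gateway proxy integration, which is where the `statusCode`, `headers`, `body`, and `isBase64Encoded` keys come from. The handler name `get_thumbnail` and the PNG bytes are made up for the example.

```python
import base64
import json


def get_thumbnail(event, context):
    """Return binary data in a JSON-serializable response (hypothetical handler)."""
    # Placeholder bytes: the 8-byte PNG signature stands in for a real image.
    blob = b"\x89PNG\r\n\x1a\n"
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "image/png"},
        # Bytes are not JSON-serializable, so send them as base64 text.
        "body": base64.b64encode(blob).decode("ascii"),
        "isBase64Encoded": True,
    }


if __name__ == "__main__":
    response = get_thumbnail(None, None)
    # Serializes cleanly because the body is now plain text.
    print(json.dumps(response))
```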

Setting up the deployment

You may notice a yaml file in the folder structure named serverless.yml.

We need to replace the example name with the function we want to call and add the environment variables to be set in AWS.

service: everse-serverless-test

frameworkVersion: '3'

provider:
  name: aws
  runtime: python3.8
  stage: dev
  region: us-east-1

functions:
  lambda_function:
    handler: lambda_function.get_token
    environment:
      client_id: "forge_client_id"
      client_secret: "forge_client_secret"
    timeout: 60 # typically no need to change the default 6-second timeout

Note that the handler name is the Python script name plus the function we want to run from that script, separated by a dot. We also added a timeout override so the function can run for at most one minute.
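To make the `module.function` naming concrete, here is a small sketch of how such a string can be turned into a callable. It is only an illustration of the convention, not the code the Lambda runtime actually uses, and `resolve_handler` is a hypothetical name.

```python
import importlib


def resolve_handler(handler: str):
    """Split 'module.function' on the last dot and return the callable."""
    module_name, function_name = handler.rsplit(".", 1)
    module = importlib.import_module(module_name)
    return getattr(module, function_name)


if __name__ == "__main__":
    # 'json.dumps' stands in for 'lambda_function.get_token' so the
    # example runs without the project files or Forge credentials.
    func = resolve_handler("json.dumps")
    print(func({"ok": True}))
```

With the project on the import path and the environment variables set, `resolve_handler("lambda_function.get_token")` would return the function defined earlier.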

Add support for pip libraries

If you want to use libraries such as requests, pandas, numpy, etc., you need to include them in the package uploaded to AWS.

To do this, we add the serverless-python-requirements plugin, which will take care of the extra packages. To make this work on AWS, we set pythonRequirements to use dockerizePip: non-linux.

Other options may not work depending on the version of Linux we are using.

plugins:
  - serverless-python-requirements

custom:
  pythonRequirements:
    dockerizePip: non-linux

Freeze the requirements to a text file so they will be included in the Docker image.

It’s important to have the environment activated. If not, pip freeze will pick up all the packages you have installed on your computer.

pip freeze > requirements.txt
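If you are unsure whether the environment is active before freezing, a quick check like the one below can help. It is a minimal sketch that relies on `sys.prefix` differing from `sys.base_prefix` inside an environment created by venv or a recent virtualenv. `in_virtualenv` is a hypothetical helper name.

```python
import sys


def in_virtualenv() -> bool:
    """Return True when the interpreter runs inside a virtual environment."""
    # Inside an environment, sys.prefix points at the environment folder
    # while sys.base_prefix still points at the base installation.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


if __name__ == "__main__":
    if in_virtualenv():
        print(f"Virtual environment active: {sys.prefix}")
    else:
        print("No virtual environment detected; pip freeze would list global packages.")
```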

Ignore project folders

Exclude dependency folders from the package to reduce the size of the payload; the dependencies are installed again as part of the deployment.

package:
  exclude:
    - node_modules/**
    - venv/**

Deploying

We are ready to go now. Serverless has everything it needs to create the lambda function in your AWS account.

serverless deploy

Out of the box, Serverless uses the AWS credentials stored in the .aws/credentials file in your user folder. You can also deploy with a different profile:

serverless deploy --aws-profile your_profile_name

Let’s test it!

Let’s invoke the function and see what we receive. Here you may find more options for testing.

pablo@pc:/home/pablo/everse.serverless.test$ serverless invoke --function lambda_function

Running "serverless" from node_modules

{
    "access_token": "NewToken",
    "token_type": "Bearer",
    "expires_in": 3599
}
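A client that receives this response typically needs two things from it: an Authorization header and a sense of when to ask for a new token. The sketch below shows one way to derive both, assuming `expires_in` is expressed in seconds as is usual for OAuth responses. The helper names `build_auth_header` and `expires_at` are hypothetical.

```python
import time


def build_auth_header(token_response: dict) -> dict:
    """Build the Authorization header from the token response."""
    token_type = token_response["token_type"]
    access_token = token_response["access_token"]
    return {"Authorization": f"{token_type} {access_token}"}


def expires_at(token_response: dict, issued_at: float) -> float:
    """Return the epoch time at which the token stops being valid."""
    return issued_at + token_response["expires_in"]


if __name__ == "__main__":
    # Sample values copied from the invoke output above.
    sample = {"access_token": "NewToken", "token_type": "Bearer", "expires_in": 3599}
    print(build_auth_header(sample))
    print(expires_at(sample, issued_at=time.time()))
```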

Conclusion

In the coming weeks, we will continue talking about implementations of this framework using S3 buckets, scheduled triggers, and GitHub Actions handling the CI/CD of the infrastructure.

We think it’s a great match for simple projects like the one in the example, where the scope is small and time to market is important.

The framework allows adding more resources and permissions, but none are needed by default.

We found the Serverless Framework easier than other options such as Terraform or CloudFormation because it handles the creation of roles, permissions, and the stack out of the box.

References

Sample code

Pablo Derendinger

https://www.e-verse.com

I’m an architect who decided to make his life easier by coding. Curious by nature, I approach challenges armed with lateral thinking and a few humble programming skills. I love to work with passionate people and push the boundaries of the industry. Bring me your problems. Impossible is possible, but it takes more time.